Crypto Bug Bounties: Getting Paid for Hacking Blockchain

Before blockchain there were Netscape, Google, and Heartbleed. Now there are Immunefi, ten-million-dollar payouts, and smart contracts waiting to be picked apart.

The stereotype evoked by the word "hacker" is astonishingly resilient: a young guy in a black hoodie, staring darkly at a screen in a dimly lit room, stealing the passwords of people who put too much trust in the internet. That image has persisted through decades of real-life security culture because it is a convenient way to reduce something complex to a villain, and villains are always useful. What you hear less often is that some of the best hackers are on the other side of that transaction, finding holes before the crooks do (and getting paid for their trouble). That practice has a name, an institutional memory and a professional structure that predate blockchain by well over a decade.

The first organized bug bounty program in the modern style was launched by Netscape on October 10, 1995. The company was working on Navigator 2.0, then the leading browser, and made a public call: find a security bug, disclose it responsibly to the company, and get paid for your efforts. The budget was small by today's standards ($50,000 for the entire program), but the idea was truly original. Netscape was, in effect, admitting publicly that its software had vulnerabilities it could not detect internally and that outsiders might be better positioned to discover them. That implicit admission mattered more than the money.

The idea got off to a slow start through the 2000s and accelerated when the big platforms joined in. In 2010 Google announced its Vulnerability Reward Program, which now pays out more than $12 million to researchers in a single year and more than $50 million cumulatively. Microsoft followed with an official program in 2013, and Apple, which had long resisted the idea, finally opened a public program of its own in 2020. By the mid-2010s, bug bounties were commonplace for any technology company serious about its security, and services such as HackerOne and Bugcrowd had emerged to run the programs at scale by pairing companies with freelance security researchers who hunt for vulnerabilities full time.

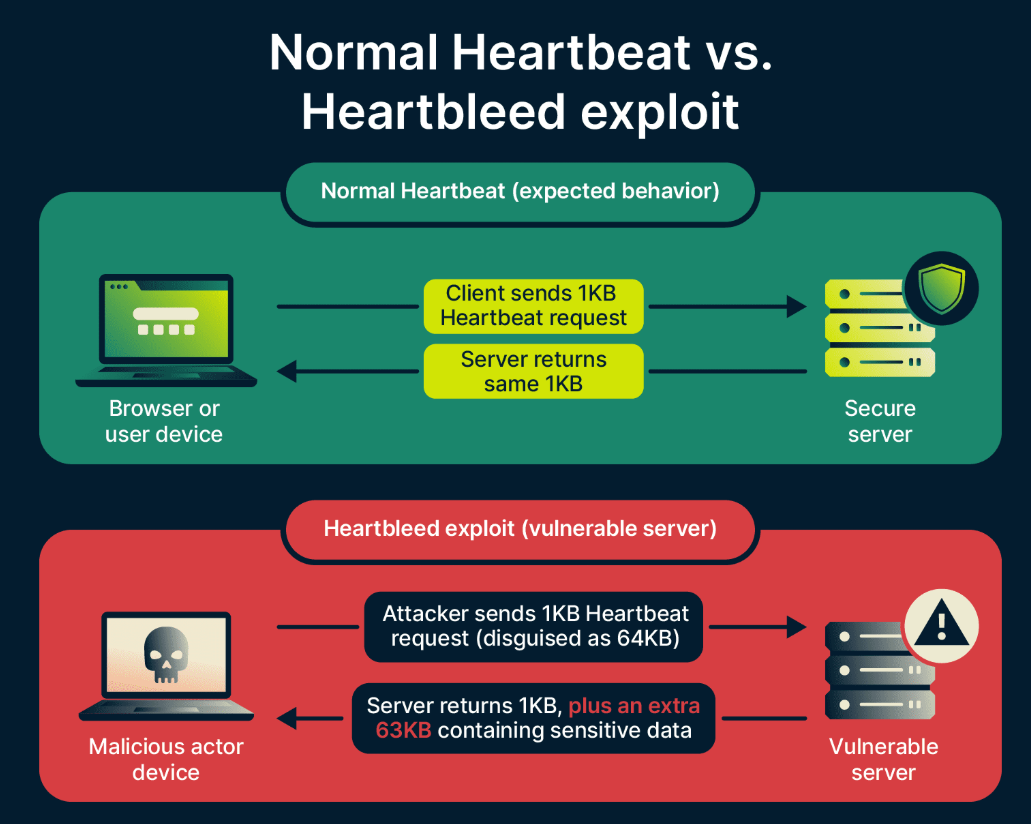

However, not all major security discoveries took place within a formal bounty program, and some of the most consequential illustrated what can happen without one. In 2014, Neel Mehta of Google and a team at the Finnish firm Codenomicon independently discovered a serious flaw in OpenSSL, the cryptographic library that underpins a large portion of the internet's encrypted traffic. The flaw, known as Heartbleed, let an attacker read server memory they should never have been able to access, exposing in clear text private keys, user data and credentials used by tens of thousands of services. There was no bug bounty to claim. The vulnerability was reported responsibly, but it spent more time exposed between discovery and patching than anyone would like to think about, and the follow-up amounted to a months-long global scramble of software patches, certificate reissues and password resets. The counterfactual (what if a government intelligence service or criminal operation had discovered it first?) was uncomfortable enough to hasten the adoption of structured disclosure programs throughout the industry.

Why Crypto Needs White Hats More Than Most

The security problem in blockchain is structurally different from, and fundamentally harder than, the average software security problem, which helps explain why bug bounties have become such a central piece of the landscape here.

In traditional software, a vulnerability can be severe, but the damage is bounded. A compromised banking web application might leak data or enable fraud, but the harm is contained within a system of laws, insurance, fraud detection and the ability to reverse illicit transactions. The money has a paper trail. An attacker is not working in a vacuum.

A DeFi exploit plays out differently in nearly every dimension. If an intruder exploits a flaw in a smart contract, all the funds in a protocol can be drained within seconds. A successful attacker holds cryptocurrency that is not only difficult to trace but almost impossible to recover without the attacker's cooperation. Nobody can undo the transaction because there is no central authority. At any point in time, billions of dollars sit in publicly readable, immutable, autonomously executing smart contracts. Your code is your product, and your product is a vault. Whoever reaches a flaw first takes what is there, and the only recourse is the permanent on-chain record.

This is what makes the spear-versus-shield dynamic in crypto feel so urgent. Any protocol that holds value is a target from the moment it is deployed. The attackers are not disorganized. Some are linked to nation states, some are sophisticated criminal enterprises, and many others are simply individuals with flexible morals and fluency in Solidity; they have cash on hand and watch like hawks for what gets deployed. Waiting for users to churn because of security issues is not a sustainable business strategy. The only option is to find the vulnerabilities before others do, and to hire people talented enough to find them.

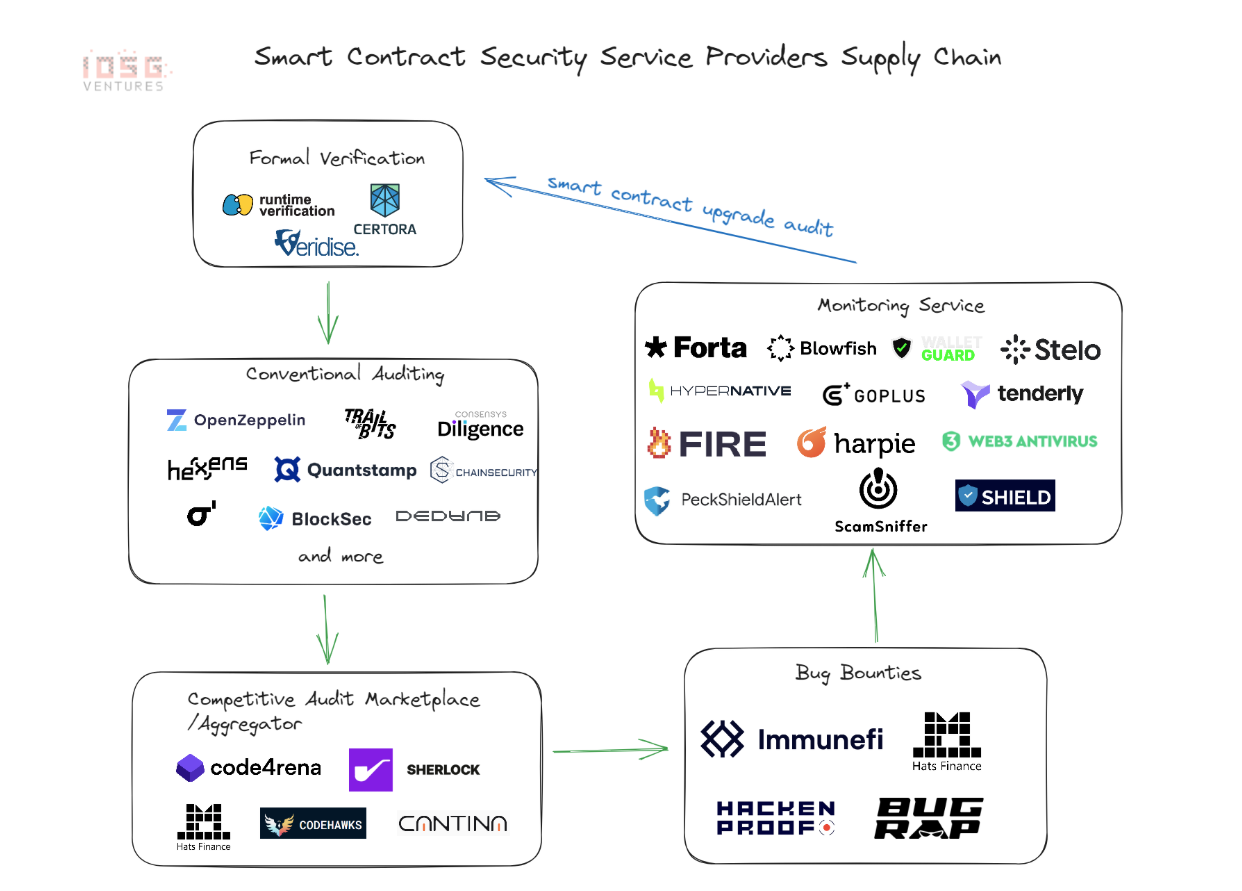

Auditing and penetration testing (getting a security firm to go over the code before you deploy it) are necessary but not sufficient. Audits are typically time-boxed and performed by teams of finite size. A smart contract system can have millions of potential interaction paths, and an audit team under a time constraint will cover the more obvious ones. Bug bounty programs widen the search considerably by drawing on a global pool of researchers with different backgrounds, different mental models and no deadline. Combining the two, a comprehensive audit before mainnet followed by a standing bounty program, is now the standard approach for security-minded protocols.

CEO Math: A Thousand Dollars or a Million

The economic rationale for bug bounty programs should be simple enough to need no explanation. In practice it does need one, because the expense is realized now while the savings are theoretical and spread over a long period of time.

A protocol that pays a $10,000 bounty for a critical vulnerability has spent $10,000 on its security. The same protocol, had the vulnerability gone unreported and been exploited by a malicious actor, might have lost everything: tens of millions gone in minutes, followed by all the complications of recovery and, often, the end of operations altogether. The bounty was not an expense; it was an insurance payout made before the insured event could happen. No amount of arguing conveys this math before launch, yet every CEO who has been through a major exploit understands it viscerally. Those who have not tend to see security spend as just one more operational cost to be minimized while checking the compliance box.

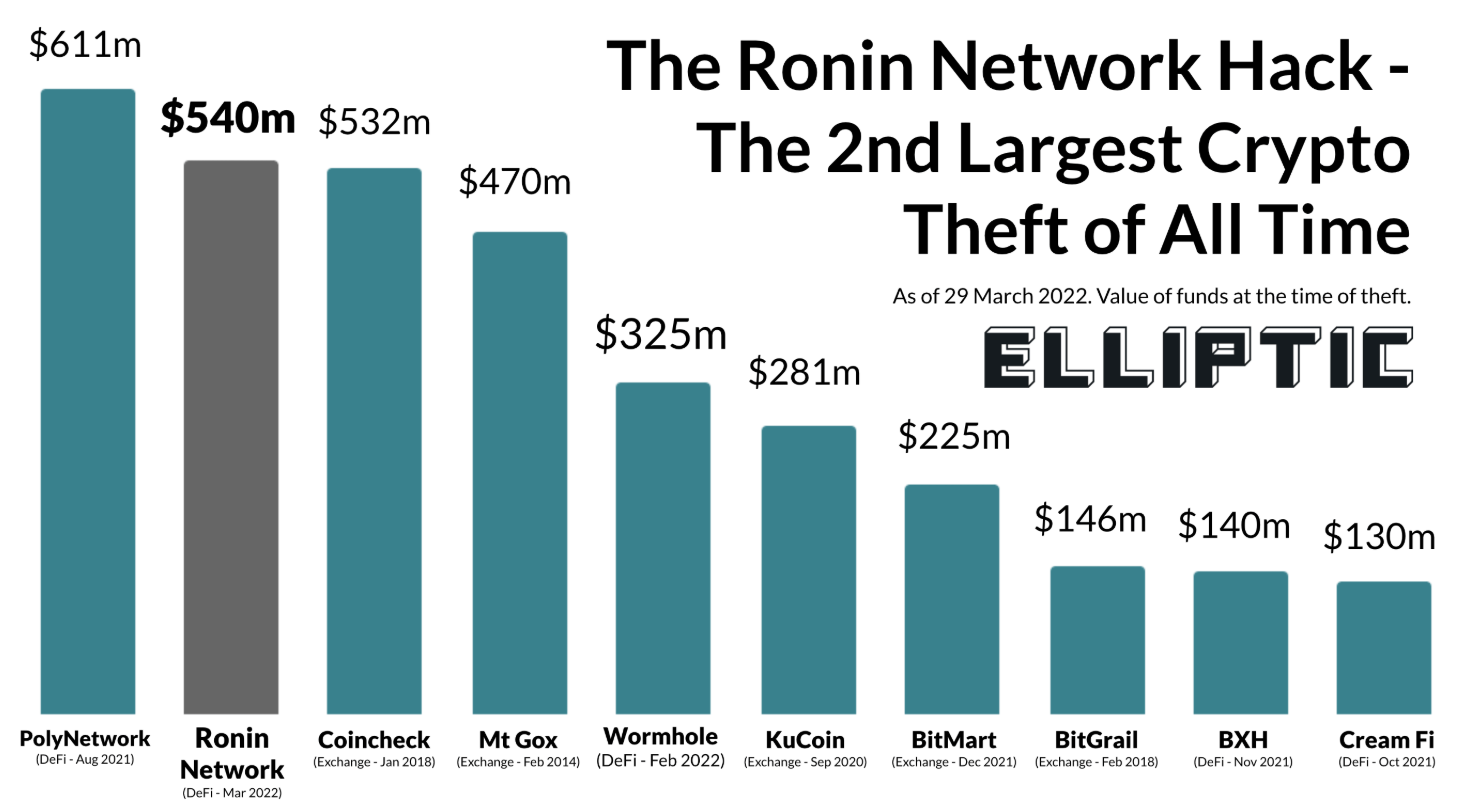

The history of DeFi exploits supplies the cost data. In 2022 alone, the Ronin Network bridge suffered a $625 million loss. Another bridge, Wormhole, was hacked in February at a cost of $320 million. Nomad Bridge lost $190 million when, once the initial attack pattern was known, any user could drain the protocol; that vulnerability might have cost only $50,000 in bounties to discover and close. These are not edge cases. They are the record of what happens in a high-value environment where finding holes is richly rewarded and exploiting them costs almost nothing.

The less-discussed risk runs the other way. A protocol that opens a bug bounty program before it is ready for one is effectively issuing a paid invitation to find everything its internal team missed, and if the codebase is poorly audited, that could be just about everything. For example, a program capped at $100,000 that launches against a contract system with twenty critical vulnerabilities could receive simultaneous disclosures that exhaust the entire bounty budget in days while leaving the protocol unable to operate securely. Many early DeFi projects landed in roughly this spot: a bounty program is publicly announced, reports pour in revealing that the internal audit was only an overview, and remediation costs quickly outpace what the team had set aside for its entire security effort. The moral of the story is not that bug bounties are risky; it is that they presuppose a minimum standard of code quality. Protocols that launch a bounty program as a replacement for substantive internal security work, rather than an addition to it, find out their worst problems in the worst way possible.

These lessons have produced the following real-world sequence: internal review, one or more external audits by trusted firms, and then production with a standing bug bounty. Some protocols add a staged rollout, capping the maximum value at risk for a defined period while the bounty is live and raising the liquidity limit only once no critical failures have surfaced. This is slower and often costlier than simply shipping. The alternative is occasionally catastrophic.

Ecosystem: Platforms, Programs and Record Payouts

Crypto bug bounty infrastructure has matured significantly since the early days of informal disclosure emails and ad hoc rewards.

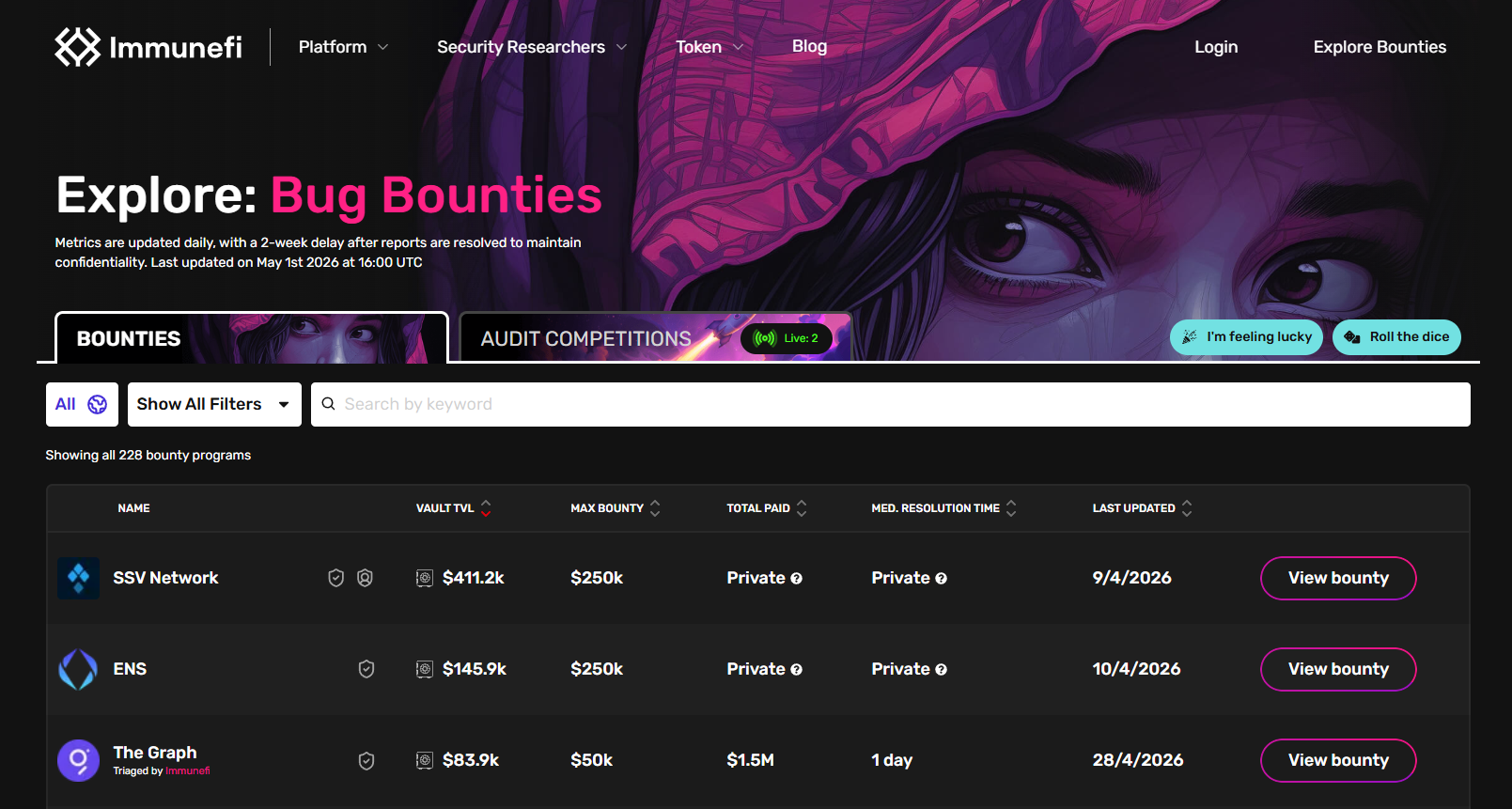

Immunefi, launched in December 2020, is the largest platform in the space. It has paid out over $100 million to researchers across more than 3,000 bug reports, and by early 2026 it claimed to have helped prevent losses of more than $25 billion across at least 500 protocols. The platform serves a community of about 45,000 registered security researchers, supports structured disclosure processes and mediates the negotiations between researchers and protocols over severity classification and payout amounts. Immunefi takes a cut of bounty payouts, which reasonably aligns its incentives with both sides of the market: it does better when researchers find real bugs and protocols pay for them.

The largest single payout in the platform's history remains a $10 million reward for a vulnerability reported in February 2022: a researcher going by the name "satya0x" found an uninitialized proxy vulnerability in the Wormhole bridge. If exploited, the vulnerability would have put locked funds worth roughly $736 million at risk. The researcher disclosed it responsibly and was awarded what was at the time the largest known bug bounty payment ever. In December 2021, Polygon paid researcher Leon Spacewalker $2.2 million for a critical bug that could have enabled the minting of up to 9.3 billion MATIC tokens, worth billions of dollars at the prices of the time. The math is clear in both cases: the bounty was large in absolute terms and small relative to what might have been lost had the attack not been prevented.
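The comparison between bounty size and value at risk can be made concrete with a few lines of arithmetic. The sketch below uses only dollar figures quoted in this article (the Wormhole bounty and the hypothetical $50,000 Nomad bounty); the function name `bounty_share` is an illustrative choice, not part of any platform's tooling.

```python
# Dollar figures as quoted in this article: (bounty, value at risk or lost).
CASES = {
    "Wormhole bounty (satya0x)": (10_000_000, 736_000_000),
    "Nomad (hypothetical $50k bounty)": (50_000, 190_000_000),
}


def bounty_share(bounty_usd: float, value_at_risk_usd: float) -> float:
    """Return the bounty as a percentage of the value it protects."""
    if value_at_risk_usd <= 0:
        raise ValueError("value at risk must be positive")
    return 100.0 * bounty_usd / value_at_risk_usd


for name, (bounty, at_risk) in CASES.items():
    share = bounty_share(bounty, at_risk)
    print(f"{name}: {share:.3f}% of the value at stake")
```

Even the record $10 million payout comes to under 1.5% of the funds it protected, and the hypothetical Nomad bounty to a few hundredths of a percent of the eventual loss.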

Incentive programs run at the protocol level now reach numbers that would have been hard to imagine just a few years ago. Uniswap's v4 program offers a maximum payout of $15.5 million for critical vulnerabilities. LayerZero has open bounties of up to $15 million. In 2026, the Ethereum Foundation raised its maximum payout to $1 million. These figures have to reflect the value of what the protocols are trying to secure, and they also have to scale with the caliber of researcher they want to attract: a $1,000 cap will not motivate someone capable of finding a game-breaking vulnerability in a multibillion-dollar protocol.

Code4rena and Sherlock work slightly differently. They host competitive audit contests: within a specified time frame, multiple researchers inspect the same codebase at once and receive shares of a total prize pool based on the severity and validity of their findings. Code4rena reports a community of more than 8,300 registered auditors and more than 24,000 unique findings across all of its audits. Sherlock has held more than 175 such contests. The competitive format changes the incentive structure: researchers are pushed to look for problems others are likely to miss, because findings submitted by many participants often receive a small payout or none. It also tends to surface more issues than sequential audits or open-ended bounty programs do on their own. Other large crypto organizations, such as Coinbase and MakerDAO, run bounty programs of their own. Across the active Web3 bug bounty market, well over USD 162 million is now on offer, a figure that reflects both the size of what is at stake and the professionalization path many researchers have traveled.

The Craft and Why It Is Not Easy Money

The image of the bug bounty hunter as someone who spots a vulnerability one afternoon and cashes in a six-figure payout is not borne out by the reality of the work. The practitioners who earn a steady living from security research in the crypto space are usually specialists whose path involved many years of technical preparation in areas where most developers rarely venture.

Finding the highest-severity defects in production smart contract code requires expertise in the Ethereum Virtual Machine: not just knowing how to write Solidity, but understanding what it compiles into, what the EVM actually does while executing bytecode, and which behaviors the compiler guarantees versus which edge cases can become security issues when combined with gas optimizations made by cash-strapped developers under deadline. It also requires more than familiarity with the common vulnerability classes (reentrancy, integer overflow, flash loan manipulation, oracle price manipulation, access control failures, initialization vulnerabilities); it requires the ability to detect new attack vectors that do not conform to any known pattern. The most valuable findings are the ones no one was even looking for.
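To make one of those vulnerability classes concrete, here is a minimal Python sketch of reentrancy. It is a toy model, not EVM or Solidity code: `ToyVault`, its method names and the deposit amounts are all hypothetical, and a Python callback stands in for the external call a contract makes when it sends funds. The vulnerable method hands over control before clearing the caller's balance; the safe method updates state first.

```python
class ToyVault:
    """Toy stand-in for a contract holding user funds (not real EVM code)."""

    def __init__(self):
        self.balances = {}
        self.total = 0  # funds actually held by the vault

    def deposit(self, who, amount):
        self.balances[who] = self.balances.get(who, 0) + amount
        self.total += amount

    def withdraw_vulnerable(self, who, on_receive):
        amount = self.balances.get(who, 0)
        if amount == 0 or self.total < amount:
            return
        self.total -= amount    # funds leave the vault
        on_receive(amount)      # control passes to the recipient
        self.balances[who] = 0  # balance is cleared too late

    def withdraw_safe(self, who, on_receive):
        amount = self.balances.get(who, 0)
        if amount == 0 or self.total < amount:
            return
        self.balances[who] = 0  # state is updated first
        self.total -= amount
        on_receive(amount)      # external interaction comes last


def run_attack(method_name: str) -> int:
    """Return how much an attacker who deposited 10 manages to withdraw."""
    vault = ToyVault()
    vault.deposit("victim", 90)
    vault.deposit("attacker", 10)
    received = []

    def on_receive(amount):
        received.append(amount)
        # Re-enter the same withdrawal before the first call has finished.
        getattr(vault, method_name)("attacker", on_receive)

    getattr(vault, method_name)("attacker", on_receive)
    return sum(received)


print("vulnerable:", run_attack("withdraw_vulnerable"))  # 100
print("safe:", run_attack("withdraw_safe"))              # 10
```

In the vulnerable version the attacker walks away with the victim's 90 as well as their own 10, because every re-entrant call still sees the original balance. Reordering three lines closes the hole.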

The toolkit researchers bring to this work has matured significantly and now includes tools that are in most cases indistinguishable from what a malicious actor would deploy:

Slither, a static analysis framework developed by Trail of Bits, scans Solidity code against dozens of known vulnerability patterns and produces a prioritized report. It is a first step, not the last one: it produces false positives and misses logic-layer vulnerabilities completely.

Mythril uses symbolic execution to explore the possible states of a contract and checks whether any reachable state violates security properties specified by the researcher. It is computationally expensive, however, and manages only shallow analysis of complex contracts.

Echidna is a property-based fuzzer: the researcher supplies a set of invariants that a contract should always satisfy, and Echidna runs through thousands of random transaction sequences attempting to break them. It is especially good at finding edge cases in arithmetic and state-management logic.

Foundry is now where the majority of serious security research is done, because it keeps the gap between "I think this will work" and "I can prove that this works" very small, especially since researchers write their tests and exploits directly in Solidity.

With Certora's formal verification tooling, researchers write specifications of contract behavior as mathematical properties and then verify them against the actual bytecode. This is the most rigorous method and also the most demanding in terms of skill and effort.

Manticore, another Trail of Bits tool, performs dynamic symbolic execution and can automatically generate inputs that drive execution down specific code paths in the contract, something static analysis alone cannot do.
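The property-based fuzzing idea described for Echidna can be sketched in plain Python. This is an illustration of the technique only, not Echidna's interface (Echidna works on Solidity contracts): `BuggyToken`, the invariant function and the `fuzz` driver are hypothetical names, and the bug is planted deliberately. The fuzzer throws random transfer sequences at the token and stops at the first sequence that breaks the stated invariant.

```python
import random


class BuggyToken:
    """Toy token with a deliberately planted self-transfer bug."""

    def __init__(self, holders, supply_each):
        self.balances = {h: supply_each for h in holders}
        self.total_supply = supply_each * len(holders)

    def transfer(self, sender, recipient, amount):
        if amount <= 0 or self.balances[sender] < amount:
            return False
        # Bug: both new balances are computed before either is written,
        # so a self-transfer overwrites the debit and mints tokens.
        sender_after = self.balances[sender] - amount
        recipient_after = self.balances[recipient] + amount
        self.balances[sender] = sender_after
        self.balances[recipient] = recipient_after
        return True


def invariant_supply_conserved(token):
    """Property that should hold after every transaction."""
    return sum(token.balances.values()) == token.total_supply


def fuzz(seed=0, sequences=1000, max_len=20):
    """Run random call sequences; return the first one that breaks the invariant."""
    rng = random.Random(seed)
    holders = ["alice", "bob", "carol"]
    for _ in range(sequences):
        token = BuggyToken(holders, 100)
        trace = []
        for _ in range(rng.randint(1, max_len)):
            call = (rng.choice(holders), rng.choice(holders), rng.randint(0, 150))
            token.transfer(*call)
            trace.append(call)
            if not invariant_supply_conserved(token):
                return trace
    return None


trace = fuzz()
if trace is None:
    print("no counterexample found")
else:
    print(f"invariant broken after {len(trace)} call(s); last call: {trace[-1]}")
```

The researcher's real contribution is the invariant. The random search is cheap; knowing that total supply must be conserved, and that nothing else in the test suite checks it, is the expertise.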

None of these tools finds critical vulnerabilities automatically. They narrow the space of potential candidates and highlight those that a human must then assess. A researcher who cannot read EVM assembly, reason about transaction ordering and understand the economics of a DeFi protocol at a system level will be unable to do much with the output of any of them. These tools are amplifiers of expertise, not replacements for it.

The practical difficulties go well beyond the technical. Competition is intense. During an audit contest on Code4rena or Sherlock, dozens of researchers study the same codebase at once. One researcher typically claims a critical finding; everyone else who finds the same problem gets little or nothing. A researcher who puts a week into a contest and finds only medium-severity issues may walk away with a couple hundred bucks. Income variability is high and the dry spells are real.

Proving a finding can be as difficult as discovering it. The researcher must give a practical demonstration of exploitability, usually with a working proof-of-concept exploit. Protocols sometimes dispute the severity classification, arguing that mitigating factors turn a critical finding into a medium one. Researchers who do not document their work carefully, or who lack the leverage that comes with reputation, may find themselves underpaid for important discoveries.

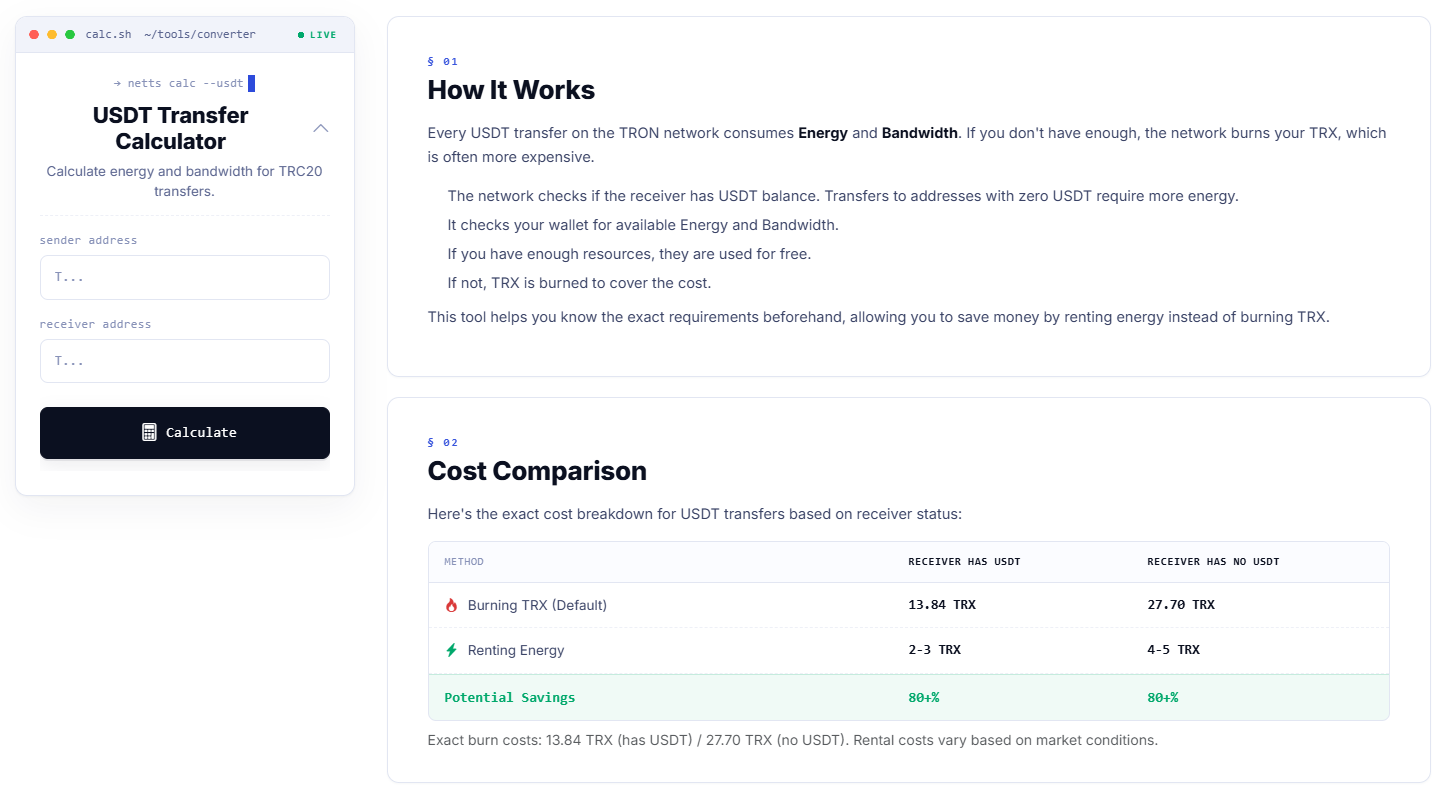

Finally, the legal and tax environment is a complication unto itself. Most bounties are paid in some form of crypto, whether USDT, USDC, ETH or a protocol's native token. Receiving a USDT transfer as income creates a taxable event: the recipient has to record the value at the time of receipt for reporting purposes and, if the payout was made in a volatile digital asset, account for capital gains on any price movement before conversion. A bug bounty practice carries real overhead and, like any business, requires balancing time invested against tangible results. Ignore the administrative side and it becomes another problem that compounds quietly.
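The record-keeping described above comes down to two numbers per payout: the value at receipt and the price movement before conversion. The sketch below assumes a simple model in which the receipt value counts as income and the later difference counts as a gain or loss; the `Payout` class and the ETH prices are hypothetical, and actual treatment depends on jurisdiction.

```python
from dataclasses import dataclass


@dataclass
class Payout:
    """One bounty payment received in a digital asset (illustrative model)."""

    asset: str
    amount: float
    usd_price_at_receipt: float

    @property
    def income_usd(self) -> float:
        """Value recorded as income on the day the payout arrives."""
        return self.amount * self.usd_price_at_receipt

    def gain_on_conversion(self, usd_price_at_conversion: float) -> float:
        """Gain (or loss, if negative) from price movement before conversion."""
        return self.amount * (usd_price_at_conversion - self.usd_price_at_receipt)


# Hypothetical example: 2 ETH received at $3,000 and converted at $3,500.
payout = Payout(asset="ETH", amount=2.0, usd_price_at_receipt=3000.0)
print(f"income at receipt: ${payout.income_usd:,.2f}")
print(f"gain on conversion: ${payout.gain_on_conversion(3500.0):,.2f}")
```

For a stablecoin payout the second number stays close to zero, which is one practical reason many researchers prefer USDT or USDC over a protocol's native token.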

But the deeper challenge is that this work has a high ceiling and a zero floor for earnings. A researcher who develops real expertise and a proven track record (one who has discovered critical protocol vulnerabilities and whose name appears in audit reports and postmortems) can earn money comparable to senior engineering salaries at major companies. Getting there takes either unusual natural aptitude or years of honing a specific craft. Almost anyone who tries to make a living from it alone quickly discovers that the learning curve is much longer than expected, the competition is better prepared than expected, and the income earned while still learning is not enough to cover expenses.

That does not make it a bad field. It makes it an honest one. The very skill set that allows a bug bounty researcher to succeed (deep technical focus, systems thinking, creativity in the face of uncertainty and a willingness to work for hours on something that may yield no return) is precisely the skill set sought in security practitioners across every domain. A person who has developed those skills to the level crypto security research demands is in a position to choose among careers. The bug bounty path is one of them, and an increasingly viable one for the few who pursue it.

For anyone running a USDT-based workflow, whether settling who gets paid which bounty, splitting payouts among multiple collaborators, or simply moving the reward off protocol, transfer fees are worth planning for. The Netts USDT Transfer Calculator does just that: enter the sender and receiver addresses and it tells you how much Energy and Bandwidth the transaction needs, what would be burned by default and whether existing resources can be used, and how the costs compare. Sending to an address that already holds USDT costs 13.84 TRX burned versus 2-3 TRX with rented Energy; sending to a fresh address costs 27.70 TRX versus 4-5 TRX with rented resources, a saving of roughly 80% or more either way.
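The savings implied by those figures can be checked with a short calculation. The sketch below uses only the TRX amounts quoted above; `saving_percent` is an illustrative helper and has nothing to do with the calculator's own implementation.

```python
def saving_percent(burn_trx: float, rented_trx: float) -> float:
    """Percentage saved by renting resources instead of burning TRX."""
    if burn_trx <= 0:
        raise ValueError("burn cost must be positive")
    return 100.0 * (burn_trx - rented_trx) / burn_trx


# (cost when burning TRX, (low, high) cost with rented resources), as quoted above.
SCENARIOS = {
    "recipient already holds USDT": (13.84, (2.0, 3.0)),
    "fresh recipient address": (27.70, (4.0, 5.0)),
}

for name, (burn, (low, high)) in SCENARIOS.items():
    best = saving_percent(burn, low)
    worst = saving_percent(burn, high)
    print(f"{name}: {worst:.1f}% to {best:.1f}% saved")
```

With the quoted figures, the saving ranges from about 78% to about 86%, depending on where in the rented-cost range a given transfer falls.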